BotVisibility

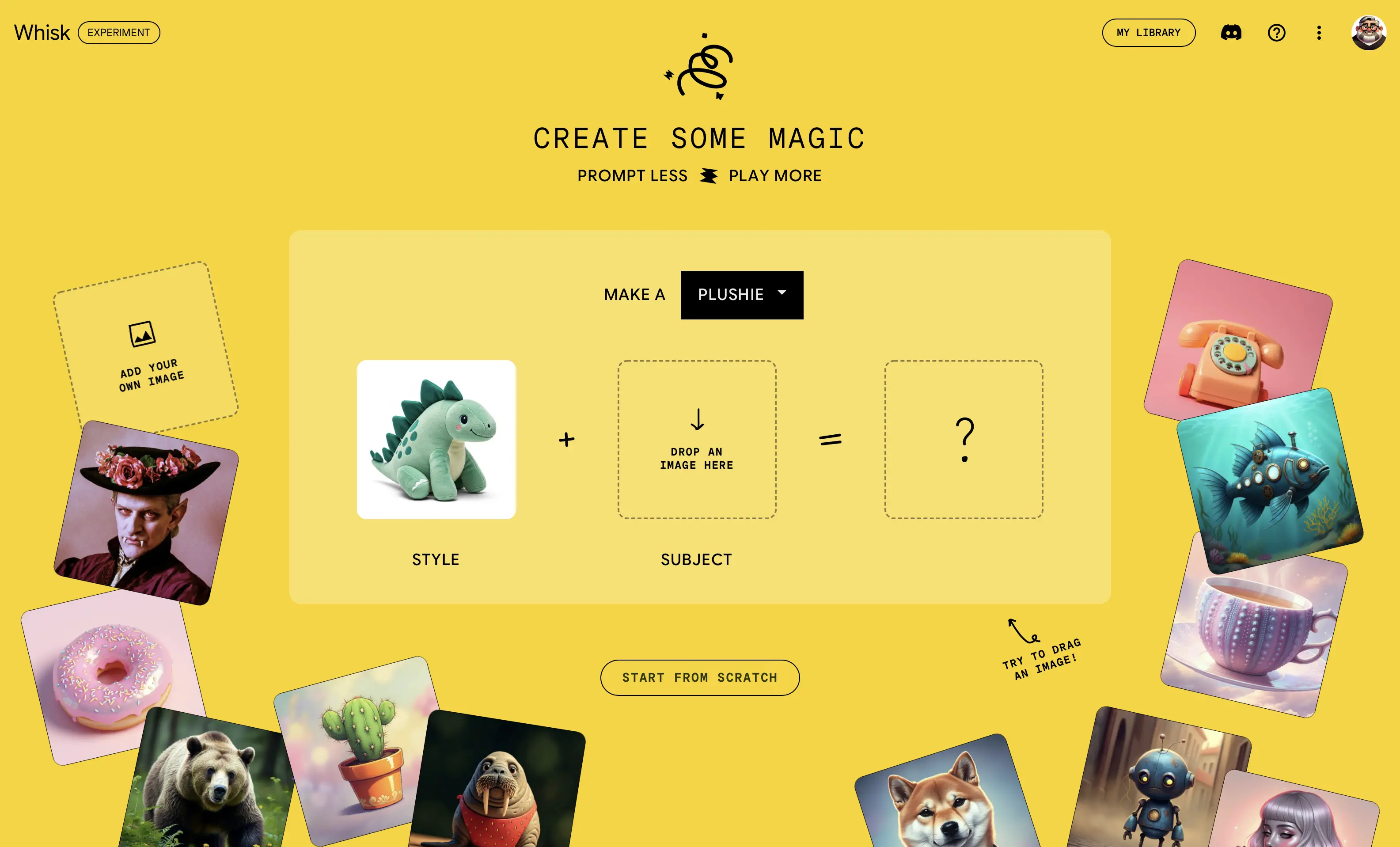

AI agents are visiting your site. Most sites fail the entrance exam.

Every week, more AI agents show up at your front door. They're trying to read your API, discover your capabilities, and figure out how to work with you. And most websites hand them a blank stare. No llms.txt. No agent card. No OpenAPI spec. No CORS headers. Just raw HTML and a prayer.

I built BotVisibility to answer one question: how bad is it?

What it does

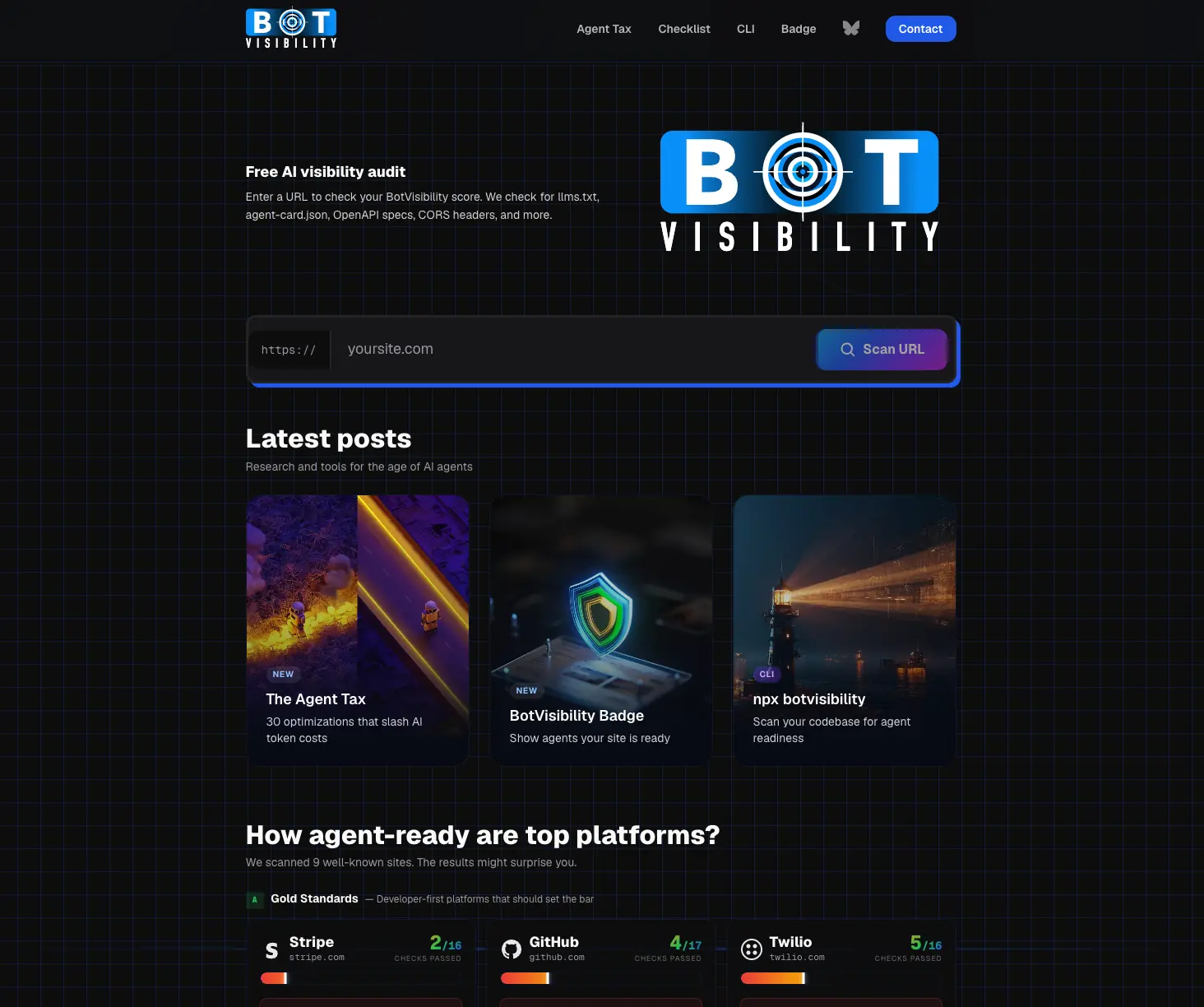

Enter a URL. Get a score. BotVisibility runs 37 automated checks across four progressive levels of AI agent readiness and tells you exactly where you stand. No signup. No credit card. Free.

L1: Discoverable — 14 checks. Can an agent even find you? The checks include llms.txt, agent-card.json, OpenAPI specs, robots.txt AI policy, CORS headers, AI meta tags, skill files, MCP server manifests, RSS feeds, and token efficiency. If agents can't discover you, nothing else matters.
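At its core, a Level 1 probe asks a handful of well-known URLs whether they exist. Below is a minimal sketch that assumes conventional locations: `/llms.txt` from the llms.txt proposal, `/.well-known/agent-card.json` for an A2A agent card, `/openapi.json` for an OpenAPI spec, and `/robots.txt`. Those paths and the `probe_discoverability` helper are assumptions for illustration, not BotVisibility's actual check list. The fetcher is passed in so the logic can be exercised without network access.

```python
from typing import Callable, Dict, Optional
from urllib.error import HTTPError
from urllib.request import Request, urlopen

# ASSUMPTION: conventional well-known locations; real sites may publish elsewhere.
DISCOVERY_PATHS = {
    "llms.txt": "/llms.txt",
    "agent card": "/.well-known/agent-card.json",
    "OpenAPI spec": "/openapi.json",
    "robots.txt": "/robots.txt",
}

# A fetcher returns the HTTP status for a URL, or None if it is unreachable.
Fetcher = Callable[[str], Optional[int]]


def http_status(url: str, timeout: float = 5.0) -> Optional[int]:
    """Return the status code of a GET request, or None on network failure."""
    req = Request(url, headers={"User-Agent": "discovery-probe-sketch"})
    try:
        with urlopen(req, timeout=timeout) as resp:
            return resp.status
    except HTTPError as err:
        return err.code  # 404, 403, etc.: the server answered, the file is missing
    except OSError:
        return None  # DNS failure, refused connection, timeout


def probe_discoverability(base_url: str, fetch: Fetcher = http_status) -> Dict[str, bool]:
    """Report which assumed discovery files answer with HTTP 200."""
    base = base_url.rstrip("/")
    return {name: fetch(base + path) == 200 for name, path in DISCOVERY_PATHS.items()}


if __name__ == "__main__":
    for name, found in probe_discoverability("https://example.com").items():
        print(f"{'PASS' if found else 'FAIL'}  {name}")
```

A real check would also inspect the response body, since many sites answer unknown paths with a 200 and an HTML error page.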

L2: Usable — 9 checks. Once discovered, can agents actually work with your API? Read operations, write operations, the primary value action, API key auth, scoped keys, OpenID config, structured errors, async operations, idempotency support. Agents need predictable interfaces.
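Idempotency support is one of the Level 2 items that is easy to describe and easy to get wrong. A common pattern (Stripe's API uses an `Idempotency-Key` request header for this) is for the server to remember the response to each client-supplied key and replay it on retries, so an agent that times out and retries a write doesn't create a duplicate. The `IdempotentStore` class, the in-memory dict, and the 422 error shape are hypothetical simplifications for this sketch; a production version would persist keys with an expiry.

```python
import hashlib
import json
from typing import Any, Callable, Dict, Tuple

Response = Tuple[int, Dict[str, Any]]


class IdempotentStore:
    """Hypothetical in-memory replay cache keyed by an idempotency key."""

    def __init__(self) -> None:
        # key -> (fingerprint of the request body, stored response)
        self._seen: Dict[str, Tuple[str, Response]] = {}

    @staticmethod
    def _fingerprint(body: Dict[str, Any]) -> str:
        # Canonical JSON so dict key order doesn't change the hash.
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def handle(self, key: str, body: Dict[str, Any],
               operation: Callable[[Dict[str, Any]], Response]) -> Response:
        fp = self._fingerprint(body)
        if key in self._seen:
            stored_fp, stored_response = self._seen[key]
            if stored_fp != fp:
                # Same key, different payload: return a structured error, don't guess.
                return 422, {"error": "idempotency_key_reused",
                             "detail": "Key was already used with a different request body."}
            return stored_response  # replay without re-running the side effect
        response = operation(body)
        self._seen[key] = (fp, response)
        return response
```

Refusing a mismatched body matters for agents: a buggy agent that reuses a key for a new order gets a structured error instead of a silently replayed old response.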

L3: Optimized — 7 checks. Is the agent experience efficient? Sparse fields, cursor pagination, search and filtering, bulk operations, rate limit headers, caching headers, MCP tool quality. These reduce the token cost of every interaction.
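A small sketch makes two of the Level 3 items, sparse fields and cursor pagination, easy to see. Here an agent asks only for the fields it needs and walks the collection with an opaque cursor rather than page offsets. The `list_items` function, the `fields` and `cursor` parameter names, and the base64-encoded "last id" cursor are illustrative choices, not a format BotVisibility requires.

```python
import base64
import json
from typing import Any, Dict, List, Optional, Sequence


def encode_cursor(last_id: int) -> str:
    # Opaque to clients: they pass it back unchanged.
    return base64.urlsafe_b64encode(json.dumps({"after": last_id}).encode()).decode()


def decode_cursor(cursor: str) -> int:
    return json.loads(base64.urlsafe_b64decode(cursor.encode()))["after"]


def list_items(rows: Sequence[Dict[str, Any]], limit: int = 2,
               cursor: Optional[str] = None,
               fields: Optional[List[str]] = None) -> Dict[str, Any]:
    """Return one page of rows (assumed sorted by 'id') plus a cursor for the next page."""
    after = decode_cursor(cursor) if cursor else None
    # Keyset pagination: continue strictly after the last id already returned.
    remaining = [r for r in rows if after is None or r["id"] > after]
    page = remaining[:limit]
    if fields:
        # Sparse fieldset: drop everything the caller didn't ask for.
        page = [{k: r[k] for k in fields if k in r} for r in page]
    # Build the cursor from the full rows, so it works even if 'id' wasn't requested.
    next_cursor = encode_cursor(remaining[limit - 1]["id"]) if len(remaining) > limit else None
    return {"data": page, "next_cursor": next_cursor}
```

Unlike offset pagination, a keyset cursor doesn't shift when rows with lower ids are inserted, and sparse fields keep bulky text such as a long `description` out of the agent's context window.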

L4: Agent-Native — 7 checks. Is your system actually built for AI agents? Checks include intent endpoints, agent session management, scoped tokens, and audit logging. Level 4 requires the --repo flag because these patterns live in source code, not public HTTP responses.
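Level 4 patterns such as scoped tokens and audit logging live inside the application, which is why only a source scan can see them. Here's a minimal sketch of how the two can fit together: every agent call is checked against the token's scopes and recorded whether or not it's allowed. The `AgentToken` type, the in-memory `AUDIT_LOG`, the `requires_scope` decorator, and the `orders:cancel` scope string are all hypothetical names for illustration, not what BotVisibility looks for verbatim.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from functools import wraps
from typing import Any, Callable, Dict, List, Set

AUDIT_LOG: List[Dict[str, Any]] = []  # stand-in for durable, append-only storage


@dataclass
class AgentToken:
    agent_id: str
    scopes: Set[str] = field(default_factory=set)


class ScopeError(PermissionError):
    pass


def requires_scope(scope: str) -> Callable:
    """Reject calls whose token lacks `scope`; audit every attempt either way."""
    def decorator(fn: Callable) -> Callable:
        @wraps(fn)
        def wrapper(token: AgentToken, *args: Any, **kwargs: Any) -> Any:
            allowed = scope in token.scopes
            AUDIT_LOG.append({
                "at": datetime.now(timezone.utc).isoformat(),
                "agent": token.agent_id,
                "action": fn.__name__,
                "scope": scope,
                "allowed": allowed,
            })
            if not allowed:
                raise ScopeError(f"token for {token.agent_id} lacks scope {scope!r}")
            return fn(token, *args, **kwargs)
        return wrapper
    return decorator


@requires_scope("orders:cancel")
def cancel_order(token: AgentToken, order_id: str) -> str:
    # Intent-style endpoint: one call expresses the agent's whole goal.
    return f"order {order_id} cancelled"
```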

The Agent Tax

Here's the number that matters. BotVisibility calculates what I'm calling the Agent Tax: the token overhead an AI agent pays because your site isn't structured for machines. A site with no agent infrastructure forces agents to reverse-engineer everything from HTML docs. That can cost 10x to 100x more tokens than reading a structured spec.
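BotVisibility's exact Agent Tax formula isn't spelled out here, so the following is only a back-of-the-envelope version of the idea: compare the tokens an agent must read to learn an API from HTML docs with the tokens in a structured spec. It assumes the rough rule of thumb of about four characters per token for English text; real tokenizers vary, and `estimate_tokens` and `agent_tax` are hypothetical helpers.

```python
from typing import Iterable

CHARS_PER_TOKEN = 4  # ASSUMPTION: rough heuristic for English text; real tokenizers differ


def estimate_tokens(text: str) -> int:
    # Ceiling division so any non-empty text counts as at least one token.
    return -(-len(text) // CHARS_PER_TOKEN)


def agent_tax(html_pages: Iterable[str], spec: str) -> float:
    """Ratio of tokens spent reading HTML docs to tokens in a structured spec."""
    html_tokens = sum(estimate_tokens(page) for page in html_pages)
    spec_tokens = estimate_tokens(spec)
    if spec_tokens == 0:
        raise ValueError("spec is empty; the ratio is undefined")
    return html_tokens / spec_tokens
```

For example, two hypothetical HTML pages of 120,000 and 80,000 characters against an 8,000-character spec give `agent_tax(...) == 25.0`, inside the 10x to 100x range described above.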

We scanned nine well-known sites. Stripe passes 2 of 16 checks. GitHub passes 4 of 17. The New York Times hits a 100x Agent Tax — agents get zero guidance on content licensing or acceptable use. Chase.com forces banking agents to screen-scrape. That's a security nightmare dressed as a website.

The CLI

Like Lighthouse, but for AI agents. Zero install required.

```shell
npx botvisibility stripe.com
```

That's it. Instant agent-readiness report in your terminal. Works against any public URL. Want JSON output for your CI/CD pipeline? Add --json.

Want to go deeper than HTTP scanning? Point --repo at your project root and BotVisibility pattern-matches your actual source code: JavaScript, TypeScript, Python, Go, Java, Ruby, PHP, whatever. The --repo flag unlocks Level 3 code checks and all of Level 4. It finds implementations that exist in your code but aren't yet exposed in public responses, such as pagination logic, bulk endpoints, and agent session handling.
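To give a feel for what pattern matching over a repo involves, here is a rough sketch using regular expressions and a hand-picked set of file extensions. The pattern names, the regexes, and the `scan_repo` helper are hypothetical, not BotVisibility's rules, and plain regex matching will both miss and misfire on plenty of real code.

```python
import re
from pathlib import Path
from typing import Dict, List

# ASSUMPTION: illustrative signals for a few patterns mentioned in the post.
PATTERNS: Dict[str, re.Pattern] = {
    "cursor pagination": re.compile(r"\b(next_?cursor|nextCursor|after_cursor)\b"),
    "bulk endpoint": re.compile(r"""['"]/[\w/]*bulk[\w/]*['"]""", re.IGNORECASE),
    "idempotency": re.compile(r"idempotency[-_]?key", re.IGNORECASE),
    "agent sessions": re.compile(r"agent[_-]?session", re.IGNORECASE),
}

SOURCE_EXTENSIONS = {".js", ".ts", ".py", ".go", ".java", ".rb", ".php"}


def scan_text(text: str) -> List[str]:
    """Return the names of patterns found in one file's contents."""
    return [name for name, rx in PATTERNS.items() if rx.search(text)]


def scan_repo(root: str) -> Dict[str, List[str]]:
    """Map each pattern name to the source files where it appears."""
    hits: Dict[str, List[str]] = {name: [] for name in PATTERNS}
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in SOURCE_EXTENSIONS:
            text = path.read_text(encoding="utf-8", errors="ignore")
            for name in scan_text(text):
                hits[name].append(str(path))
    return hits
```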

Want it permanent? Install it globally:

```shell
npm install -g botvisibility
```

CI/CD integration

The --json flag makes BotVisibility a gate in your deployment pipeline. Fail builds when agent readiness drops below your threshold. A GitHub Actions workflow takes about six lines. Check the score, fail if it's below Level 1, ship if it passes. Agent readiness as a first-class quality metric, same as accessibility or performance.
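The workflow file itself isn't shown and the JSON schema isn't documented here, so what follows is a hedged sketch of the gate logic in Python rather than the six-line GitHub Actions file. It assumes the report printed by `npx botvisibility <url> --json` has a top-level `level` field holding the highest level achieved; that field name is a guess, so check the real output before relying on it. `meets_threshold`, `run_scan`, and `MIN_LEVEL` are hypothetical names.

```python
import json
import subprocess
import sys
from typing import Any, Dict

MIN_LEVEL = 1  # the post's example threshold: fail below Level 1


def meets_threshold(report: Dict[str, Any], min_level: int = MIN_LEVEL) -> bool:
    # ASSUMPTION: the JSON report exposes the achieved level as report["level"].
    level = report.get("level")
    return isinstance(level, int) and level >= min_level


def run_scan(url: str) -> Dict[str, Any]:
    """Invoke the CLI with --json and parse its stdout."""
    out = subprocess.run(
        ["npx", "botvisibility", url, "--json"],
        capture_output=True, text=True, check=True,
    ).stdout
    return json.loads(out)


if __name__ == "__main__":
    target = sys.argv[1] if len(sys.argv) > 1 else "example.com"
    report = run_scan(target)
    if not meets_threshold(report):
        print(f"Agent readiness below Level {MIN_LEVEL}: {report.get('level')}")
        sys.exit(1)  # non-zero exit fails the CI step
    print("Agent readiness gate passed")
```

Treating a missing or non-numeric level as a failure keeps the gate closed if the report format ever changes.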

The badge

Pass enough checks and you earn a BotVisibility Badge, a signal to agents that your site is ready for them. Think of it as a green light for machine traffic. This site displays its own live badge, rescanned every hour.

Drop one line of HTML into your site and you get a live score badge that updates as you improve. The ?url= version scans the site when the badge loads, with results cached for one hour. Want a static badge that doesn't rescan? Use explicit parameters instead.
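The ?url= badge behavior, scanning on load but reusing results for an hour, is a classic time-to-live cache. Here's a minimal sketch of that caching rule, assuming a hypothetical `scan` function that returns a score; it isn't BotVisibility's badge service, just the shape of the idea. The clock is injectable so expiry can be tested without waiting an hour.

```python
import time
from typing import Callable, Dict, Tuple

ONE_HOUR = 3600.0


class TTLCache:
    """Cache scan results per URL and rescan only after `ttl` seconds."""

    def __init__(self, scan: Callable[[str], int], ttl: float = ONE_HOUR,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._scan = scan
        self._ttl = ttl
        self._clock = clock
        self._entries: Dict[str, Tuple[float, int]] = {}  # url -> (expires_at, score)

    def get(self, url: str) -> int:
        now = self._clock()
        entry = self._entries.get(url)
        if entry is not None and now < entry[0]:
            return entry[1]  # still fresh: serve the cached score
        score = self._scan(url)  # missing or expired: run a new scan
        self._entries[url] = (now + self._ttl, score)
        return score
```

A static badge with explicit parameters never rescans, so it would bypass a cache like this entirely.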

Why I built this

I already built my own site for agents. llms.txt, agent cards, MCP server, A2A protocol, the whole stack. But there was no way to benchmark it. No way to know if I was missing something. No way to compare against sites that should be leading the way.

So I built the tool I wanted. Then I pointed it at Stripe, GitHub, Shopify, Salesforce, and a few others. The results were embarrassing — for them.

Scoring

BotVisibility uses a weighted cross-level algorithm — not strict sequential gates. Strong performance at higher levels can compensate for gaps at lower ones. A site with great API design but a missing llms.txt can still score well. The algorithm rewards depth, not just breadth.
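The actual weights aren't published here, so the following only illustrates how a weighted cross-level score differs from strict sequential gates. Each level's pass rate feeds into a single number, so strong Level 2 and Level 3 results can offset a Level 1 gap. The per-level check counts come from the post; the weights in `LEVEL_WEIGHTS` and the 0 to 100 scale are assumptions for the sketch, not BotVisibility's values.

```python
from typing import Dict

# Checks per level as listed in the post (14 + 9 + 7 + 7 = 37).
CHECKS_PER_LEVEL = {1: 14, 2: 9, 3: 7, 4: 7}
# ASSUMPTION: made-up weights for illustration only.
LEVEL_WEIGHTS = {1: 0.4, 2: 0.3, 3: 0.2, 4: 0.1}


def weighted_score(passed: Dict[int, int]) -> float:
    """Blend per-level pass rates into one 0-100 score, with no sequential gates."""
    total = 0.0
    for level, count in CHECKS_PER_LEVEL.items():
        got = passed.get(level, 0)
        if not 0 <= got <= count:
            raise ValueError(f"level {level}: {got} passes out of {count} checks")
        total += LEVEL_WEIGHTS[level] * got / count
    return round(100 * total, 1)


def gated_level(passed: Dict[int, int]) -> int:
    """Strict-gate alternative: highest level fully passed, with all levels below it."""
    level = 0
    for lvl in sorted(CHECKS_PER_LEVEL):
        if passed.get(lvl, 0) < CHECKS_PER_LEVEL[lvl]:
            break
        level = lvl
    return level
```

With these made-up weights, a site that passes everything through Level 3 except one Level 1 check scores 87.1, while a strict gate would leave it at Level 0.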

37 checks. Four levels. One number. botvisibility.com.

The agents are already here. The question is whether your site is ready for the conversation.